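In recent months, newly released documents related to the Jeffrey Epstein case have once again pushed tech billionaire Bill Gates into the spotlight. The disclosures have sparked heated public debate and renewed scrutiny of his past associations.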

The Epstein Files and Mounting Pressure

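As court documents involving Jeffrey Epstein continue to surface, attention has increasingly focused on Bill Gates, the co-founder of Microsoft. Gates, once the richest person in the world, now finds himself facing uncomfortable questions.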

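Previously leaked photographs showed Gates in close company with two Russian women. According to reports, he had a separate extramarital relationship with each of them.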

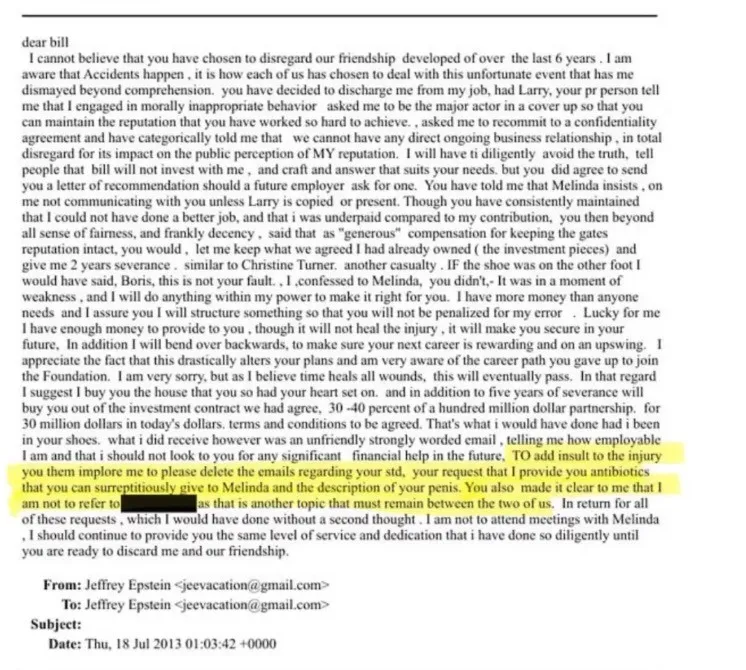

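Some allegations claim that the women were introduced to Gates through arrangements connected to Epstein. The presumed aim was to gather compromising information that could later be used as leverage. There were even claims that Epstein mentioned Gates's alleged health problems in an email while seeking antibiotics. These allegations spread quickly across media platforms.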

For weeks, Gates remained silent.

A Public Apology at the Gates Foundation

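After nearly a month of silence, Gates finally responded to the controversy. Speaking at an internal meeting of the Bill & Melinda Gates Foundation, he apologized for his relationship with Epstein and clarified details about his personal life.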

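Gates called his meetings with Epstein a "huge mistake." He acknowledged that he had brought several senior foundation executives into meetings with Epstein, and he apologized to the colleagues who were put in a difficult position by his decisions.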

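Addressing the Russian women, Gates admitted that he had had two extramarital affairs. One began after he met a Russian bridge player at a tournament. The other involved a Russian nuclear physicist he met through business activities. He stressed that neither woman was a victim of Epstein.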

He was especially explicit on one point: he insisted that he had never been with any of Epstein's victims.

Mila Antonova and the Timeline

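One of the women named in media reports is the Russian bridge player Mila Antonova. According to Gates, he met her at a bridge tournament in 2009, and their relationship developed before he met Epstein.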

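Gates said he met Epstein around 2011. By then, Epstein had already served time for soliciting a minor for prostitution. Gates claimed he was not fully aware of the details of Epstein's past. He said a background check had been carried out and that he understood Epstein was subject to travel restrictions.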

Gates said their conversations centered mainly on philanthropy and global health initiatives.

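His wife at the time, Melinda French Gates, reportedly voiced concerns about Epstein. Gates admitted that he did not take those concerns seriously enough. Later developments showed that her worries were justified.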

The Blackmail Allegations

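When the collaboration between Gates and Epstein failed to expand, Epstein allegedly changed tactics. According to reports, Epstein contacted Antonova in 2013. He offered gifts and financial help, including support for programming classes.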

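Over time, Epstein allegedly learned more about her relationship with Gates. In emails that surfaced later, Epstein appeared to hint that he had leverage over Gates.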

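Gates has repeatedly denied visiting Epstein's private island in the Caribbean, which is frequently mentioned in media reports. He stressed that he never stayed overnight there and did not take part in Epstein's criminal conduct.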

He also said that he cut off contact with Epstein after 2014.

Another Name Surfaces: Stephen Hawking

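Meanwhile, the name of another well-known figure has resurfaced in connection with Epstein-related documents. The late theoretical physicist Stephen Hawking has once again become a focus of media attention.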

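A photograph has begun circulating again. In it, Hawking sits smiling between two women in bikinis. The image comes with no clear context about when or where it was taken.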

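Hawking's family responded quickly. They said the women were long-term caregivers from the United Kingdom. According to the family, the photo was taken in 2006 at the Ritz-Carlton on St. Thomas in the Caribbean. Hawking had reportedly given a talk on quantum cosmology at a scientific conference before the photo was taken.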

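Because Epstein also attended that conference, questions have arisen about how the photo later ended up among Epstein-related materials. Hawking's family declined to answer further questions about the circumstances.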

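Notably, the image is not entirely new. It was already circulating online as early as 2012, including on Reddit, where it was described simply as a vacation snapshot.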

Public Skepticism and Unanswered Questions

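Gates's recent statement lays out his version of events. By his account, he had two extramarital affairs and later became the target of Epstein's attempts at manipulation. He firmly denies any involvement in Epstein's criminal activities.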

Whether the public finds his explanation convincing remains uncertain.

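As more documents continue to emerge, speculation persists. Some argue that the renewed attention reflects the cyclical nature of media narratives. Others suspect that undisclosed details may still be waiting to surface.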

For now, the full story remains incomplete, and many observers are watching closely to see what happens next.