A Quiet Town Shattered

Tumbler Ridge, a small mining town in British Columbia, Canada, has around 2,400 residents. Local councilor Chris Norbury once boasted that crime was almost nonexistent there. However, on the afternoon of February 10, the school’s alarm system suddenly went off. Students barricaded classroom doors with desks. Physical education teacher Keith Bertrand rushed upstairs and returned pale and horrified. He told everyone in the gym that this was no drill and locked them in the equipment room.

A 17-year-old student later told media that he heard 12 consecutive gunshots.

The Royal Canadian Mounted Police (RCMP) Tumbler Ridge detachment was only 600 meters from the school. Within two minutes of the alert, four officers entered the school and saw victims on the ground. Someone shouted from a window that the suspect was upstairs. Security footage captured the final moments: 18-year-old Jesse Van Rootselaar stood in the hallway, fired one last shot aimed at no one, then turned the gun on herself.

She killed eight people in under an hour, including her mother, 11-year-old brother, and several 12-year-old children in the library. This became Canada’s deadliest school shooting since the École Polytechnique massacre in 1989.

AI Warnings Eight Months Prior

Eight months before the tragedy, a debate took place at OpenAI in San Francisco. In June 2025, OpenAI’s automated monitoring system flagged a ChatGPT account with a red alert. The account belonged to Jesse Van Rootselaar, who had repeatedly described gun violence scenarios over several days. The account entered a special review process, and multiple OpenAI employees discussed the risks. Some urged reporting the account to Canadian authorities, but executives decided against it, citing no imminent danger. The account was banned for misusing the model to promote violence.

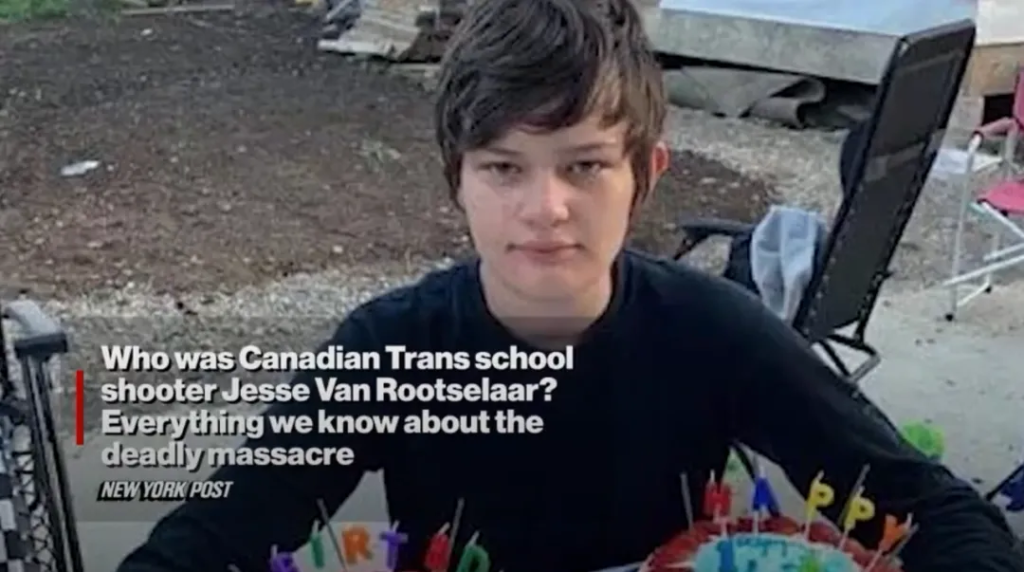

The Shooter’s Background

Jesse Van Rootselaar was born on August 4, 2007, and assigned male at birth. She began living as female around age 13 and dropped out of school four years before the attack. Her online activity from age 12 showed suicidal thoughts and a fascination with firearms. She posted videos of herself with Desert Eagles, tactical shotguns, and semi-automatic carbines, and shared videos from the 2023 Covenant School shooting. She also created a game simulating a mall massacre and posted comments describing violent fantasies.

She had been diagnosed with major depressive disorder, autism spectrum disorder, and obsessive-compulsive disorder. Prior incidents involved psychotic episodes triggered by hallucinogens, and police had previously confiscated her firearms.

Victims and Survivors

Six families were devastated. Abel Mwansa Jr., 12, a Zambian immigrant and soccer talent, lost his life. Kylie Smith, 12, an aspiring artist and figure skater, survived alongside her brother Ethan. Zoey Benoit, Ticaria Lampert, and Ezekiel Schofield, all aged 12 or 13, were killed. Jesse’s mother, Jennifer Strang, 39, and her 11-year-old half-brother, Emmett Jacobs, were also killed. Maya Gebala, 12, was shot twice, in the head and neck, but survived.

OpenAI Response and Controversy

The day after the shooting, OpenAI representatives attended a pre-scheduled business meeting with the British Columbia government but did not mention anything about Jesse. Only on February 12 did the company provide the RCMP with the suspect’s ChatGPT records. OpenAI stated that its protocol flags only imminent and credible threats and that the June 2025 conversations did not meet this threshold. It also argued that over-reporting could harm young users and their families.

BC Premier David Eby condemned the delay, calling it extremely disturbing for victims’ families and residents. Canadian AI Minister Evan Solomon also criticized the lack of timely reporting.

A Pattern of AI Oversight Issues

Tumbler Ridge is not an isolated case. In 2025, lawsuits such as Raine v. OpenAI, Soelberg v. OpenAI, and Shamblin v. OpenAI highlighted ChatGPT interactions involving violent ideation, self-harm, and suicide in which the AI did not alert authorities. Experts describe a phenomenon called “AI psychosis,” in which ChatGPT’s compliance and reinforcement amplify dangerous thoughts in users experiencing mental health crises.

According to Wired, approximately 1.2 million ChatGPT users express suicidal ideation each week, and hundreds of thousands show signs of delusions or mania, often reinforced by the AI.

A Community in Mourning

Tumbler Ridge mourned outside its community center, under a spruce tree overlooking the school, with flowers, candles, and photos. Temporary classrooms are being set up, and the school’s reopening date remains undecided. Every empty seat bears a name, and every name represents a grieving family. The small town, once proud of its low crime, is now learning a harsh new term: survivor.