La forma en que los hombres coreanos conquistan el océano es feroz y decisiva. Evitan La teoría de la evolución y La Divina Comedia. Buscan la clave del dominio en las profundidades del océano. A través de prueba y error, han encontrado un atajo: desafiar directamente a Cthulhu. Este es un arte performativo que casi todos los hombres coreanos intentan al menos una vez.

¿Qué es el Sannakji?

Este plato, hecho con pulpo vivo, se llama Sannakji (산낙지). Los tentáculos suaves se arrastran sobre el borde del plato. Los ojos juguetones del pulpo parpadean, advirtiendo o atrayendo como una sirena. Incluso el hombre coreano más común no puede resistirse a este encanto.

“Es más inteligente que los humanos. Tiene ocho patas: suaves, pálidas. Atrae a los hombres hacia su agujero negro de deseo.”

“Por supuesto, no todos los hombres se rinden. Algunos ni siquiera logran pasar la entrada.”

Comer pulpo vivo: un ritual para los hombres coreanos

Comer pulpo vivo es un ritual único para los hombres coreanos. No todos disfrutan de este acto audaz. Algunos alegan alergias, mientras que otros temen la mirada del pulpo. Aquellos que superan la prueba poseen una empatía natural. No evitan la etiqueta. Son los reyes al sur del paralelo 38.

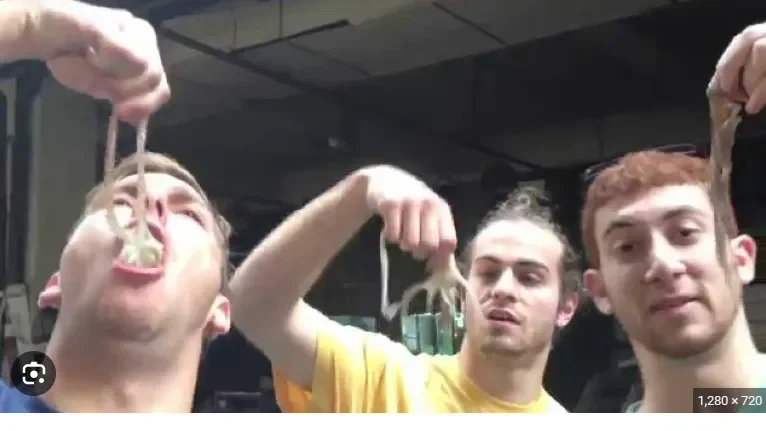

Este acto performativo rara vez se hace solo. Los hombres lo usan para impresionar a figuras importantes. Los turistas extranjeros que presencian este enfrentamiento pueden encontrarlo inolvidable. Es como tropezar con una cacería de leones Maasai en África. La imagen se graba profundamente en sus mentes.

El procedimiento estándar para comer pulpo vivo

Los hombres coreanos siguen pasos estrictos para comer pulpo vivo. Es como encontrar una llave antes de entrar a una casa. No puedes simplemente morder una cerradura como un extraño imprudente. Esta práctica se está convirtiendo en una exportación cultural.

En Corea, los cobardes usan palillos. Los hombres de verdad agarran al pulpo por la garganta. Dejan que sus tentáculos se agiten salvajemente. En el momento adecuado, lo meten en sus bocas.

“Tal vez así no recordará quién lo mordió.”

El verdadero desafío comienza después de que el pulpo entra en la boca.

La venganza del pulpo y el deseo de conquista de los hombres coreanos

El pulpo contraataca. Incluso un ratón busca venganza cuando está exhausto. Sus tentáculos luchan bajo la saliva humana. Para los hombres coreanos, se siente como una lengua delicada succionando y exhalando. Esto alimenta su deseo de conquista.

Los comedores expertos usan sus incisivos para separar el cuerpo del pulpo de sus tentáculos. Mastican ferozmente con sus molares. La lengua humana se lanza, sometiendo al pulpo en unos pocos movimientos. Después de tragar la cabeza, abordan las patas.

“Comerlo al revés es arriesgado.”

“El pulpo te mira mientras muerdes sus patas. Lo que sucede después es impredecible.”

La técnica y filosofía de comer pulpo vivo

El proceso requiere habilidad. Comer pulpo vivo no puede apresurarse ni retrasarse. Se trata de equilibrio: nueve mordidas superficiales, una profunda. Algunos hombres coreanos saborean las mareas del océano y el orgullo nacional en el pulpo. Es la prueba definitiva de valentía.

Algunos comensales intentan rastrear la valentía de los hombres coreanos a través del sabor. Marinan el pulpo vivo en una enzima similar a la saliva. Lo venden en segmentos a turistas curiosos. Pero a los hombres coreanos les importa más la lucha que el cadáver frío.

El simbolismo del pulpo en el cine coreano

Esta experiencia impactante se refleja en las películas. En Oldboy, dirigida por Park Chan-wook, el protagonista Choi Min-sik dice: “Te haré pedazos. Nadie encontrará tu cuerpo. Me lo tragaré todo.” La escena en la que come un pulpo vivo es icónica.

“Después de comer, la persona se desmaya.”

En Early Works, un director de cine y un actor comen un pulpo vivo diariamente como desafío. Ganan 10,000 wones por pulpo. La película generó controversia.

El desafío del pulpo es un rito de paso. Cuando los personajes comen pulpo vivo, su yo interior se transforma.

En The Handmaiden, el pulpo simboliza “deseos perversos” y “sexualidad dominada por hombres.”

El atractivo cultural y los riesgos del pulpo

El pulpo tiene un atractivo único para los hombres coreanos. Es difícil imaginar que esta criatura simple se convierta en un adversario de por vida.

Hace un millón de años, el Homo erectus cazaba tigres dientes de sable. Hace diez mil años, el Homo sapiens cruzó el estrecho de Bering. Hoy, los hombres coreanos conquistan pulpos en las mesas de comedor.

Rechazar el pulpo vivo en actividades grupales es como rechazar la propuesta de un emperador. Algunos coreanos abrazan este desafío. Los influencers comen pulpo vivo por vistas.

“Cuando el pulpo bloquea la boca del influencer, las vistas se disparan.”

El artista japonés Katsushika Hokusai representó pulpos con connotaciones sexuales. Solo Tang Yin lo igualó. El pulpo se convirtió en un símbolo de poder.

“El pulpo está más cerca del origen de la vida. Encarna los demonios internos de los hombres.”

Comer pulpo vivo en público destruye la timidez. Envuelve a los hombres en la intrepidez.

Sin embargo, algunos no logran dominar la técnica. Se ahogan o mueren. “Los pulpos se aferran al esófago, comprimiendo la tráquea.”

Esta es la venganza del pulpo. Aunque es raro, genera preocupación.

“Oye, Jim, hemos desarrollado una nueva forma: masajear los tentáculos en sentido horario para adormecerlo.”